Neurosymbolic AI 2026

Strategic Design will Determine Outcomes

Background and Update

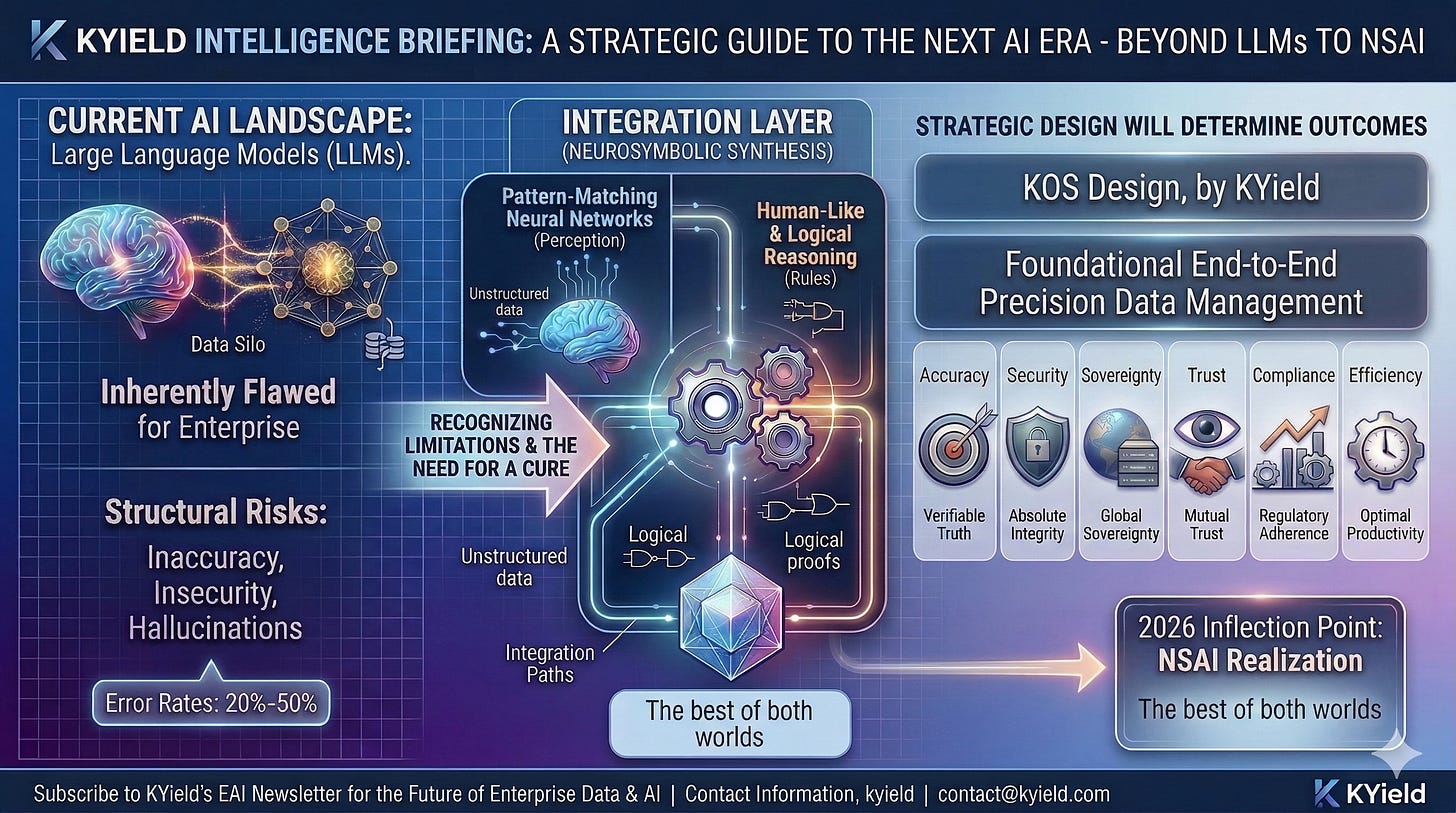

Neurosymbolic AI (NSAI) is a hybrid specialization dating back to about 1980 that bridges the divide between pattern-matching neural networks and rules-based logical reasoning. NSAI has recently attained an inflection point due in part to the maturation of R&D over the preceding decade and to the inherent flaws in large language models (LLMs). The majority of the global economy needs precision, security, and sovereignty, which requires the application of specific types of NSAI systems.

To put the scope and maturity of NSAI research in context, Google Scholar returned 112 resources labeled ‘Neurosymbolic AI’ for the 2015–2016 period and 9,050 for the 2025–2026 period. The vast majority of these resources are academic journal articles, with the remainder consisting of preprints published on arXiv and ResearchGate, as well as books and conference proceedings. In addition, recent venture capital investment in NSAI has increased significantly, spanning seed-stage through Series D funding rounds.

Practical implementations are emerging, particularly within safety-critical and regulated industries. One example is automated reasoning for complex process optimization in very large-scale networks such as AWS. Other companies with substantial investments in NSAI include Google/DeepMind, EY, and Lloyd’s. Numerous other organizations are deploying NSAI within their enterprises for specific applications, typically for analogous reasons.

Common weaknesses of NSAI architecture

NSAI systems are typically labor intensive due to the need to structure and clean data, build graphs, and design and build logical reasoning models. The work tends to require highly educated and highly paid professionals, which limits availability to large organizations.

They are difficult to scale, especially compared to LLMs, because current LLMs are trained on essentially any type of unstructured data. LLMs are designed for scale, not precision, whereas symbolic AI systems are designed and built for precision. NSAI systems attempt to do both without sacrificing the benefits of either method.
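As a rough illustration of what combining the two methods can look like, the sketch below pairs a stand-in statistical proposer with hard symbolic rules. It is a minimal sketch under stated assumptions, not a description of any production NSAI system: `propose_balance`, `RULES`, and `select` are hypothetical names, and the hard-coded candidates merely imitate the ranked guesses a neural model might return.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple


@dataclass
class Candidate:
    value: float       # value proposed by the statistical component
    confidence: float  # score in [0, 1] assigned by that component


def propose_balance(previous: float, deposit: float) -> List[Candidate]:
    """Hypothetical stand-in for a neural model: returns ranked guesses."""
    return [
        Candidate(previous + deposit * 0.75, 0.60),  # plausible but wrong
        Candidate(previous + deposit, 0.40),         # correct, lower score
    ]


# Each rule is (description, check). Checks are exact, not statistical.
Rule = Tuple[str, Callable[[float, float, float], bool]]

RULES: List[Rule] = [
    ("new balance equals previous balance plus deposit",
     lambda prev, dep, new: abs(new - (prev + dep)) < 0.005),
    ("new balance is non-negative",
     lambda prev, dep, new: new >= 0),
]


def select(previous: float, deposit: float) -> Optional[Candidate]:
    """Return the highest-confidence candidate that satisfies every rule."""
    ranked = sorted(propose_balance(previous, deposit),
                    key=lambda c: c.confidence, reverse=True)
    for candidate in ranked:
        if all(check(previous, deposit, candidate.value) for _, check in RULES):
            return candidate
    return None  # abstain rather than return an answer that breaks a rule


result = select(200.0, 1000.0)
print(result)
```

The design choice worth noting is the `None` branch: when no candidate satisfies the rules, the sketch abstains instead of returning the most confident guess.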

Although NSAI systems are much less costly to create, train, and operate than LLMs, and can operate with small-scale data, they are relatively compute intensive compared to most types of computing other than LLMs, and they require constant supervision by highly trained engineers.

Key advantages of NSAI architecture

Significantly enhanced levels of accuracy

Superior security compared to what is attainable with LLMs

Substantially greater efficiency and reduced operational costs

Strengthened organizational sovereignty

Increased trust with employees, customers, lenders, investors, and regulators

Attainment or surpassing of compliance standards

Access to growth opportunities that are otherwise unattainable

Some NSAI systems have achieved greater than 99% accuracy. While that may sound impressive in comparison to consumer LLMs, in high-volume, safety-critical environments such as a major trauma center, precision manufacturing for aerospace and automobiles, military operations, or financial transactions, anything less than perfect accuracy results in a very substantial cost. As the old saying goes, when I deposit $1,000 in my bank account, I don’t want it reflected as $750. That 75% figure is about the average accuracy rate of consumer LLMs. And when our loved ones go to the lab for bloodwork, we don’t want hallucinations influencing diagnoses or treatment decisions.
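The arithmetic behind that point is simple: the expected number of errors is volume multiplied by one minus accuracy. The snippet below works it through for a few accuracy levels. The daily volume of one million operations is a hypothetical figure chosen only for illustration, and `expected_errors` is an illustrative helper name.

```python
def expected_errors(volume: int, accuracy: float) -> float:
    """Expected number of erroneous operations at a given accuracy rate."""
    return volume * (1.0 - accuracy)


DAILY_VOLUME = 1_000_000  # hypothetical: operations processed per day

for accuracy in (0.75, 0.99, 0.9999):
    errors = expected_errors(DAILY_VOLUME, accuracy)
    print(f"accuracy {accuracy:.2%}: about {errors:,.0f} errors per day")
```

At high volume, even the step from 99% to 99.99% removes thousands of daily errors, which is why small accuracy differences matter so much in these environments.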

Even beyond safety-critical applications, few businesses can survive with an error rate as high as that of LLMs. Ask your favorite plumber what customer tolerance levels are for water leaks in houses, as one example. Hence the need for neurosymbolic hybrid systems like our KOS.

Essential Understanding: Hallucinations are Part of the LLM DNA

Given the persistent reports of professionals, such as attorneys, utilizing consumer-grade LLMs for legal work without adequately confirming the accuracy of court documents, and CEOs advocating the use of consumer LLMs for business operations without a clear understanding of the existential risks posed to their organizations, it is evident that many individuals and organizations remain significantly behind the knowledge curve regarding AI.

The error rate associated with LLMs typically ranges from 20% to 50%. These error rates decrease to below 10% only when the models are deployed in controlled settings, such as enterprise networks, and are frequently enhanced through the application of Retrieval-Augmented Generation (RAG), knowledge graphs (KGs), or a combination thereof (KG-RAG). Nevertheless, while adequate for certain applications, RAG is insufficient for others. For instance, high-profile litigation has resulted in companies being held liable for inaccuracies generated by RAG systems.

The reason is that LLMs are inherently flawed from a security and accuracy perspective, and that’s unlikely to change in the foreseeable future due to technical constraints. In addition, their business models are burdened with perverse incentives that favor insecurity and inaccuracy. While LLMs are a dream-come-true for hyperscalers confronting a scale ceiling (hence record strategic investment), they are a nightmare for many of their customers. Even though LLMs are trained on all of the world’s publicly available data and the companies have invested billions of dollars in training and inference, LLM chatbots lack the physical capacity to answer every question accurately. And if they were programmed to respond honestly to queries with “I don’t know”, consumers would migrate to other bots, which is why hallucinations are widespread.

Firms specializing in LLMs have adopted an extreme form of a “fake-it-till-you-make-it” strategy for a self-proclaimed objective of achieving god-like Artificial General Intelligence (AGI). While this approach may resonate with the insular cultural environment of LLM firms in San Francisco, and apparently constitutes a strong investment thesis for attracting certain types of capital, objective experts have long maintained that it is highly improbable that superintelligence will emerge solely from scaling current models.

I am quite confident that superintelligence of the type promoted by LLM firms will likely remain unattainable unless significant new breakthroughs are achieved, technology vendors secure access to comprehensive global data—including from premier research laboratories in every discipline—or both. This assertion is based on decades of first-hand experience, including in our Synthetic Genius Machine (SGM) R&D. Our decision to pursue a distinct pathway was motivated, in part, by the acknowledged limitations inherent in neural networks, deep learning, and models such as LLMs.

NSAI Design

Adoption trends among major enterprises indicate a sharp increase in proofs of concept moving into production, but outcomes hinge entirely on the specificity of the design. For example, while it makes sense for Amazon to employ NSAI for reasoning capabilities across its massive supply chain and logistical operations, where even a 10% improvement in costs adds up to many billions of dollars, the specific designs wouldn’t be much help for companies lacking such extreme economies of scale. The strategic rationale that influences system design for a leading cloud provider will be quite different from that for the rest of the economy. Hyperscalers are unique in their scale advantage.

In order to survive, everyone else must create and/or deepen other advantages. NSAI designs should therefore be tailored to the strategic and operational needs of each organization and individual. Doing so in an efficient and affordable manner is a nontrivial undertaking, representing our focus for much of the three decades of our R&D.

Neurosymbolic AI is advancing rapidly, yet a significant portion of adoption and potential enterprise value remains constrained by design choices. For CEOs and other C-suite executives, this necessitates a strategic pivot from recruiting AI personnel—many of whom are specialized in LLMs and Generative AI—to securing the expertise required to engineer domain-specific, knowledge-infused systems. This can be achieved through investing in costly, bespoke internal NSAI systems, acquiring sophisticated systems that align with organizational requirements, or, as I expect will become increasingly common, implementing a hybrid approach combining both strategies.

Precise design is not merely an optional feature; it constitutes the foundational architecture for any AI system to deliver dependable, explainable, and trustworthy intelligence at scale. The resulting benefits encompass not only enhanced prediction capabilities, reduced operational costs, and superior ROI, but also improved governance, fortified security, and more robust, defensible competitive differentiation. The capacity to master or procure NSAI systems specifically tailored to organizational needs may well prove decisive in determining the long-term viability of an enterprise.

Our Approach with the KOS

The KOS constitutes the inaugural enterprise-wide AI Operating System, representing the culmination of three decades of dedicated research and development. It is distinguished by its capacity for self-tailoring to each organization and every individual, incorporating robust governance and security measures as foundational design principles from its inception. The digital assistant, DANA, is provided to every employee and integrates sophisticated embedded functionalities, including prescient search, continuous learning, knowledge networks, controllable data valves, captured preventions and emergent opportunities, and seamless integration with LLMs for generative AI and agentic AI applications.

Critical Considerations for CXOs

End-to-End Precision Data Management: It’s difficult to overstate the importance of our precision data management system (DMS) that serves as the core infrastructure for the KOS, but it can be reduced to garbage-in/garbage-out vs. quality-in/quality-out. Everything else in the KOS is built on the DMS. The KOS and DANA are refined, seamlessly integrated, and automated with semi-automated customer controls.

Auditability & Compliance: The KOS is auditable. Every individual in the KOS has access permissions set by the customer’s team, including the ability to run audits and create and manage agents. The system provides the ability to generate compliance reports by showing the logical steps derived from the symbolic layer, making it ideal for regulated industries or any other that needs precision and accountability.
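To make the idea of showing the logical steps concrete, here is a minimal sketch of forward chaining that records every rule it fires. It is an assumption-laden illustration of the general technique, not the KOS implementation: the rule names, the facts, and the `forward_chain` function are all hypothetical.

```python
from typing import FrozenSet, List, Set, Tuple

# Each rule is (name, premises, conclusion). All names are hypothetical.
Rule = Tuple[str, FrozenSet[str], str]

RULES: List[Rule] = [
    ("R1", frozenset({"amount_over_10000"}), "requires_review"),
    ("R2", frozenset({"requires_review", "reviewer_signed_off"}), "approved"),
]


def forward_chain(facts: Set[str], rules: List[Rule]) -> Tuple[Set[str], List[str]]:
    """Derive new facts until nothing changes, logging each rule that fires."""
    known = set(facts)
    trace: List[str] = []
    changed = True
    while changed:
        changed = False
        for name, premises, conclusion in rules:
            # A rule fires when all premises are known and it adds a new fact.
            if premises <= known and conclusion not in known:
                known.add(conclusion)
                trace.append(f"{name}: {', '.join(sorted(premises))} -> {conclusion}")
                changed = True
    return known, trace


known, trace = forward_chain({"amount_over_10000", "reviewer_signed_off"}, RULES)
for step in trace:
    print(step)
```

Because each derived fact is tied to a named rule and its premises, the trace can be handed to an auditor as is, which is the property a compliance report depends on.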

Knowledge Creation & Transfer: The KOS is a human-centric system. Through the normal daily use of DANA (Digital Assistant with Neuroanatomical Analytics), it institutionalizes deep, domain-specific expertise that might otherwise be lost through staff turnover. Our focus on high quality over high quantity provides a significant boost to productivity. Ultra-tailored content rapidly elevates human potential, workflow, and the quality of AI outputs. Installing and using the KOS creates a CALO (Continuously Adaptive Learning Org). Adoption enables almost instant organizational transformation by utilizing state-of-the-art systems and tools.

Captured Preventions & Opportunities: Our three decades of R&D engaging with hundreds of leading organizations wasn’t wasted. A high capture rate for preventions and opportunities requires a very robust system with advanced proprietary technologies designed and integrated in a highly specific manner. It’s a deep specialty requiring decades of intense work. Our empirical research suggests that this function in the KOS has the capacity to provide unprecedented ROI even in low-risk organizations. ROI from GenAI and agentic AI pales in comparison.

Adoption Bottleneck: The primary challenge in scaling AI is not the underlying technology, but providing trustworthy systems tailored to the needs of each worker in a simple-to-use manner that’s non-threatening, enjoyable, and gets the job done. Attempting to force adoption of poorly designed systems is backfiring. Another bottleneck is organizational capability to define and encode an advanced symbolic knowledge base, hence the importance of our automated DMS. I believe the obsession with LLMs driven by short-term interests is stalling adoption of more responsible AI systems like our KOS that are much more aligned with the interests of individuals and organizations.

Talent Strategy: We provide training and certification for the KOS. The system is focused on human workflow and management of AI systems, including agents, but we don’t attempt to do it all. The system can and should be extended with customer-specific applications developed by internal teams, vendors, and/or consultants. Investing in talent that bridges data science, classical computer science, and human behavior is therefore necessary for large, complex organizations. This hybrid skillset can be a new competitive advantage. However, smaller and less complex businesses can get by without specialized talent.

Affordability & Sustainability: The KOS prioritizes high-quality data over scale, resulting in greater efficiency and lower costs compared to custom GenAI systems. Most organizations and individuals require precise accuracy relevant to their specific work, needs, and interests, not boundless access to generalized intelligence. The refined KOS design with high levels of automation is also much less costly than custom NSAI systems. The cost of the KOS is now comparable to traditional enterprise software while delivering far superior functionality and value.

Platform Evaluation: We recommend prioritizing platforms that offer seamless integration of rule-based systems and machine learning, ensuring that the symbolic design is both accessible and adaptable. In this environment it’s necessary to apply aggressive critical thinking to vendor conflicts with their existing products, services, and business models, as those conflicts are dominating most AI system designs. High-profile AI systems do not necessarily align with the best interests of customers.

Below is my recorded talk for the leading tech conference in NM in late 2019. It was the first extensive disclosure on the KOS and was followed by similar talks at the IEEE Rising Stars conference in early 2020 and for university systems and major corporations.